20022310*

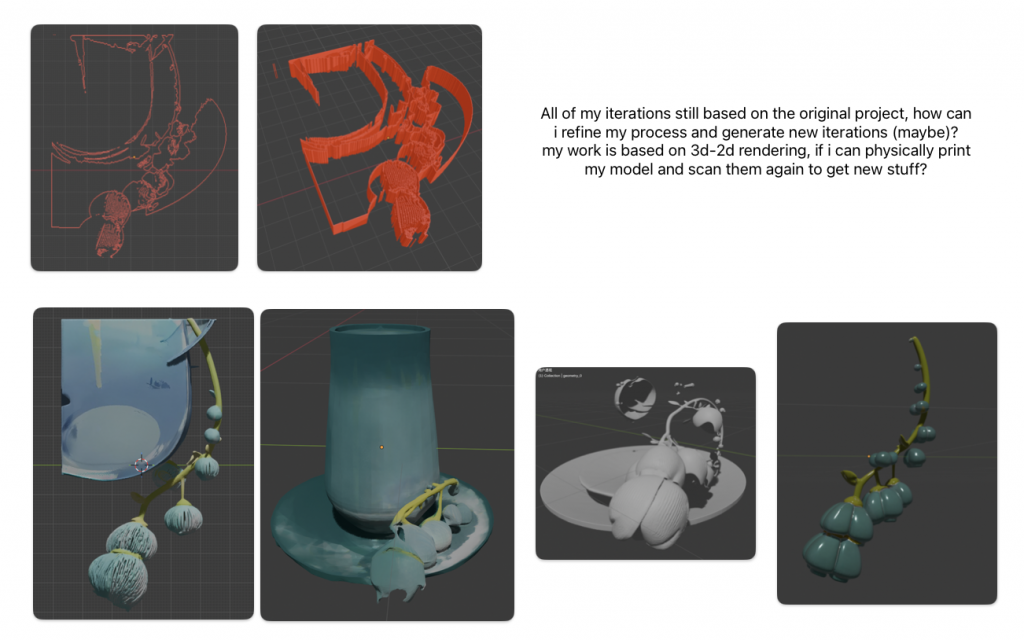

I chose Blender out of curiosity about 3D-to-2D outputs and how a single render can feel like a flat slice of a scene. During the copy process, I realized that I was not simply breaking down an object but reconstructing a view and a system. After learning by working through tutorials, I attempted to reproduce a stylized reference render as accurately as possible. Since the reference is a single cropped angle, most of my decisions were guesses: depth, scale, and rotation were repeatedly adjusted until they looked right from the front. Yet when I changed the viewpoint, the model often became uncanny, with floating connections and warped proportions, and lighting exposed these contradictions. This echoed my earlier observation that Blender’s XYZ-axis views are a kind of re-planarization: the object becomes whatever survives a chosen camera regime.

In this sense, Blender favours versionable, serviceable visibility. It produces images that can be refined, compared, and redeployed, rather than a single stable truth. My position in this process is closer to a translator or forger than an objective observer. I am not neutrally representing a real vase and flower, but re-performing an already rendered image standard, aiming for a relative neutrality limited by my technical skill and the reference’s demands. Form becomes a communication strategy: camera, composition, lighting, and render settings organize hierarchy and mood as much as geometry does.

What counts as accurate or neutral representation in a tool built on camera, perspective, and industry defaults? Am I modelling an object, or modelling a standard of visibility: what is allowed to be seen and what can be ignored?

Berger’s Ways of Seeing provided the theoretical support: reproduced images are mobile and recontextualized, and their meaning shifts with the maker, the audience, and the setting. The reference therefore carries a particular way of seeing, and my copy inevitably translates it rather than reproducing it neutrally. Jencks and Silver’s Adhocism further clarified my method: rather than building an ideal system, I worked as a bricoleur under constraint, improvising with available tools and resources to meet a specific visual purpose (Jencks and Silver, 2013, pp. 15–16). This legitimized purposeful trial and error as a method rather than a failure.

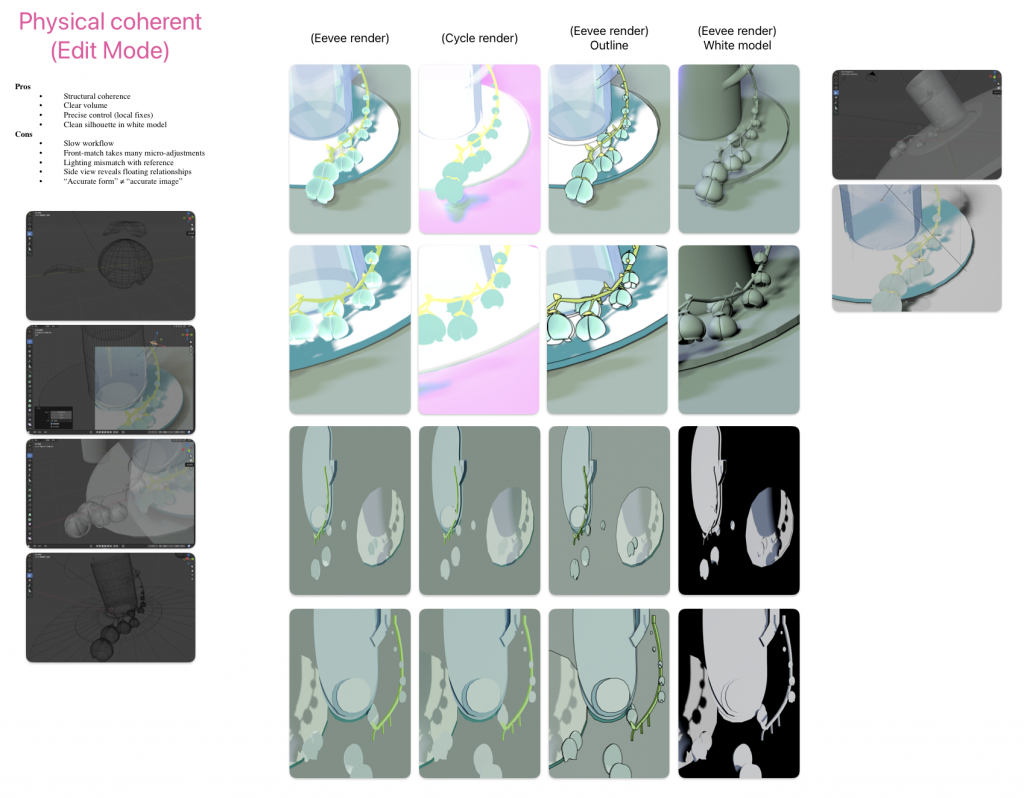

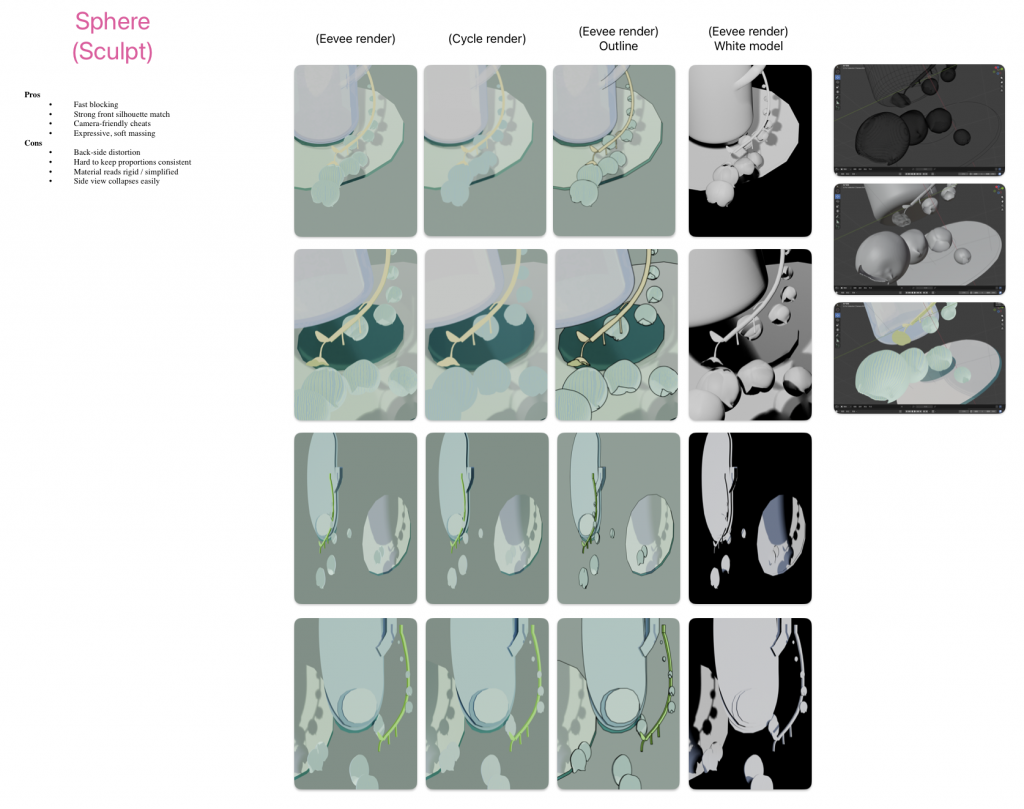

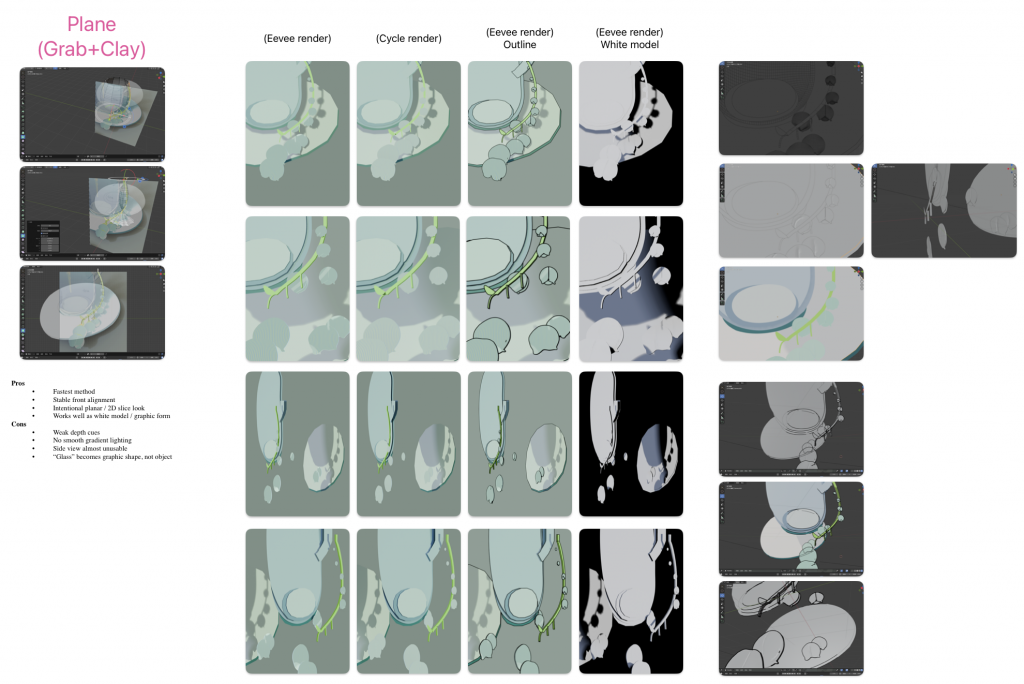

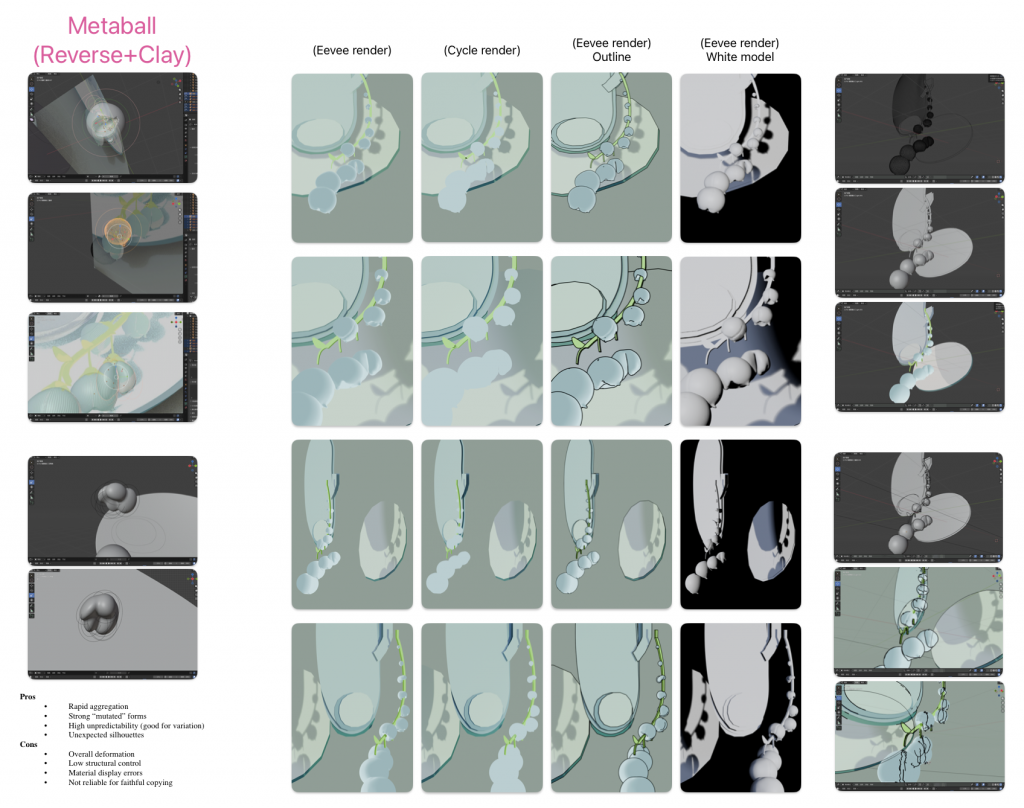

Following this lens, I shifted from chasing one perfect copy to an iterative experiment that “hacked” Blender, turning it from an image-making tool into a cataloguing instrument. First, I built four parallel versions of the same scene through contrasting modelling strategies: physically coherent edit-mode modelling, sphere sculpting, plane-based flat modelling, and metaball fusion. I kept materials consistent, then generated comparative specimens from fixed camera viewpoints using three display modes: classic render, outline, and white model. This produced a grid of evidence in which front fidelity could be separated from spatial plausibility.

Secondly, I pushed the enquiry into the viewpoint itself. For each of the four “neutral” reconstructions, I rendered a 26-camera array derived from expanded six-view logic (front/side/top variants and diagonals), then re-imported the 26 images into Blender to rebuild a spherical “view object” and exported its 26 views again. In parallel, I decomposed the scene into a sequence of single objects along an axis and rendered the same 26-view set. Across modelling types and render modes, the object progressively degraded into its viewing regime: what remained consistent was not the vase, but the rules that declared it visible. Inspired by Warburg’s Mnemosyne Atlas and Bernd and Hilla Becher’s typologies, I present these outputs as maps and adjacencies: slices of evidence that argue neutrality is less about the object’s truth than about the camera standard that makes an image appear correct.
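The count of 26 is consistent with placing one camera on every face, edge, and corner direction of a cube around the scene (6 + 12 + 8). The sketch below works under that assumption; it is one reading of the "expanded six-view logic", not a record of the actual Blender setup. The function name `camera_positions` and the radius of 5 units are hypothetical, and the code only computes positions, leaving the creation and aiming of Blender cameras aside.

```python
from itertools import product
from math import sqrt


def camera_positions(radius=1.0):
    """Return 26 positions on a sphere, one per face, edge and corner
    direction of a cube centred on the scene origin (assumed layout)."""
    positions = []
    for x, y, z in product((-1, 0, 1), repeat=3):
        if (x, y, z) == (0, 0, 0):
            continue  # the centre is the subject, not a viewpoint
        length = sqrt(x * x + y * y + z * z)
        # Normalise so every camera sits at the same distance from the subject.
        positions.append((radius * x / length,
                          radius * y / length,
                          radius * z / length))
    return positions


cameras = camera_positions(radius=5.0)

# Group the views by how many axes they deviate along:
# 1 = classic six views, 2 = edge diagonals, 3 = corner diagonals.
by_kind = {1: [], 2: [], 3: []}
for position in cameras:
    non_zero_axes = sum(1 for component in position if component != 0)
    by_kind[non_zero_axes].append(position)
```

Keeping every camera at the same radius means that differences between the 26 images come from direction alone, which is what makes them comparable as a set.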

References

Jencks, C. and Silver, N. (2013) Adhocism: The Case for Improvisation. Cambridge, MA: The MIT Press. (First published 1972).

Berger, J. (1972) Ways of Seeing. Available at: https://www.ways-of-seeing.com/ (Accessed: 28 January 2026).

Draft 3 Experiment

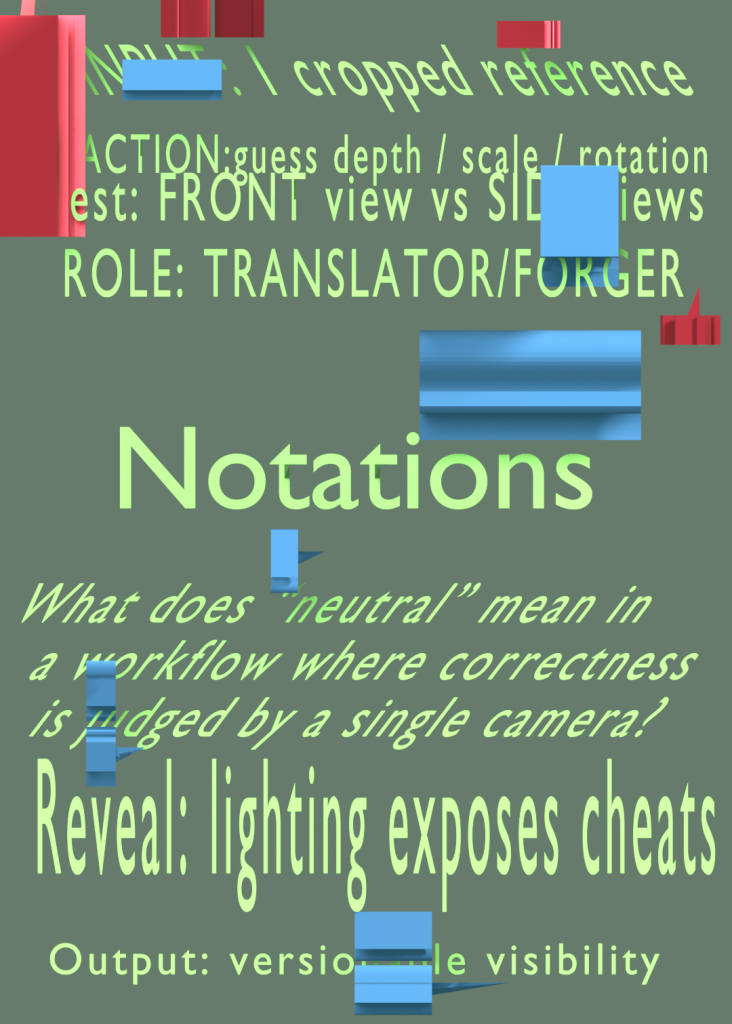

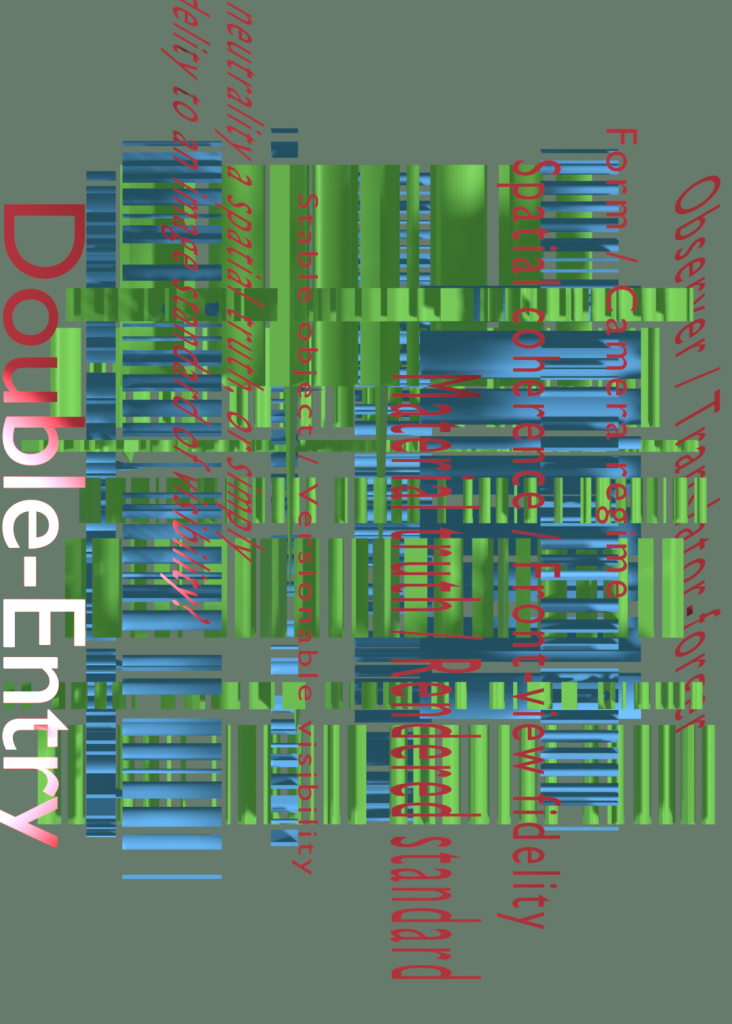

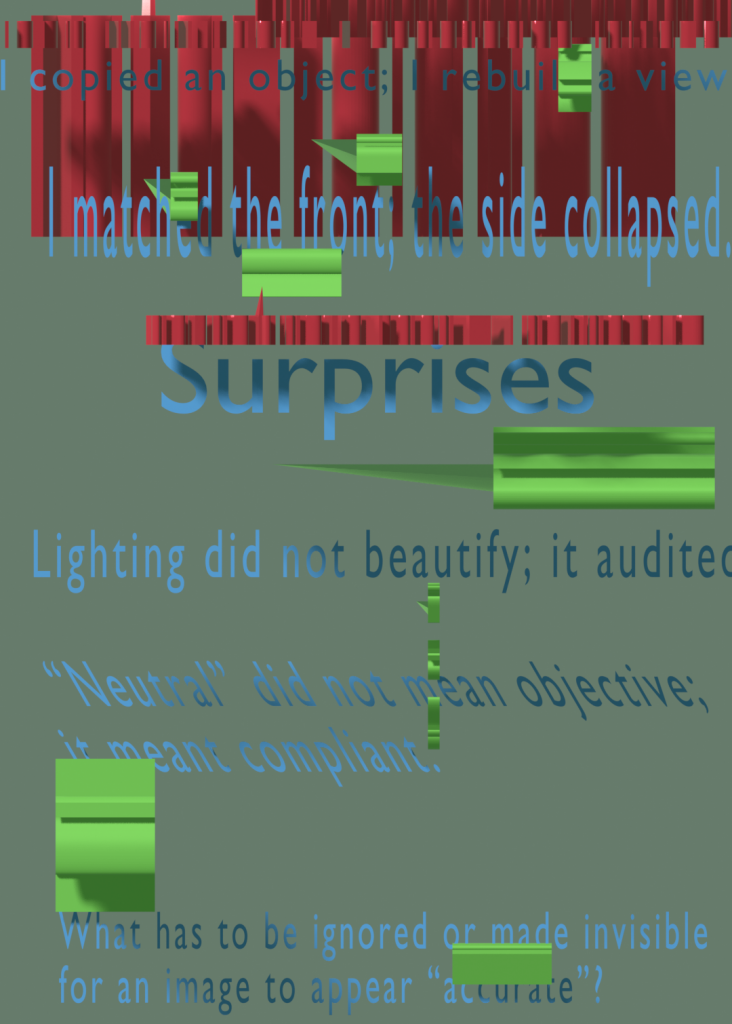

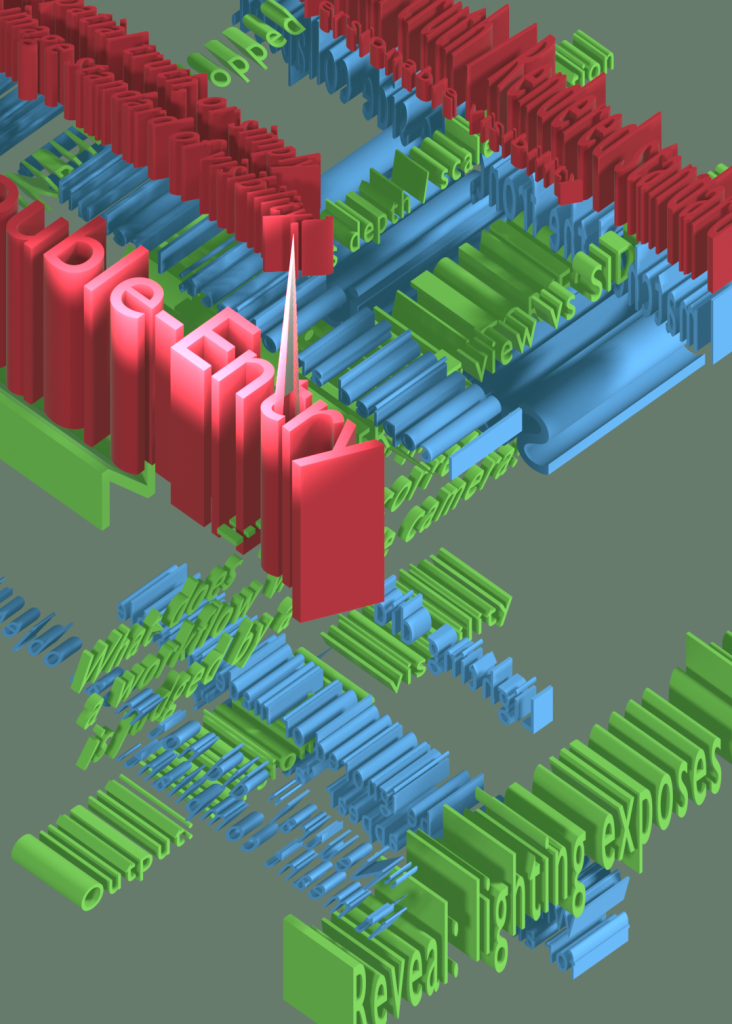

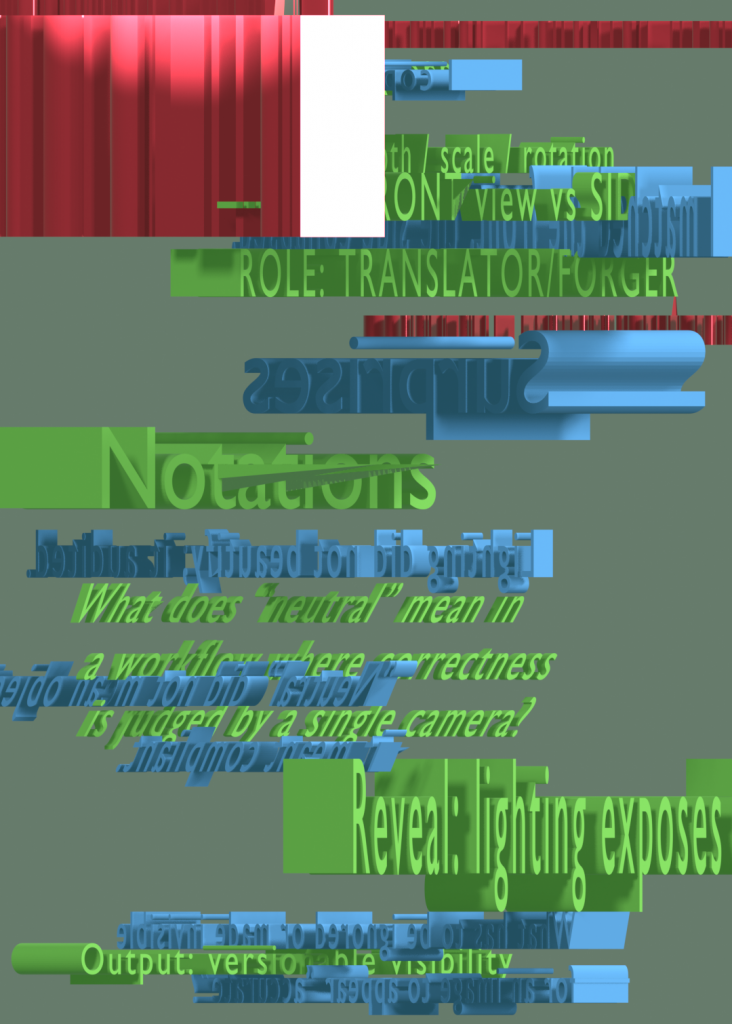

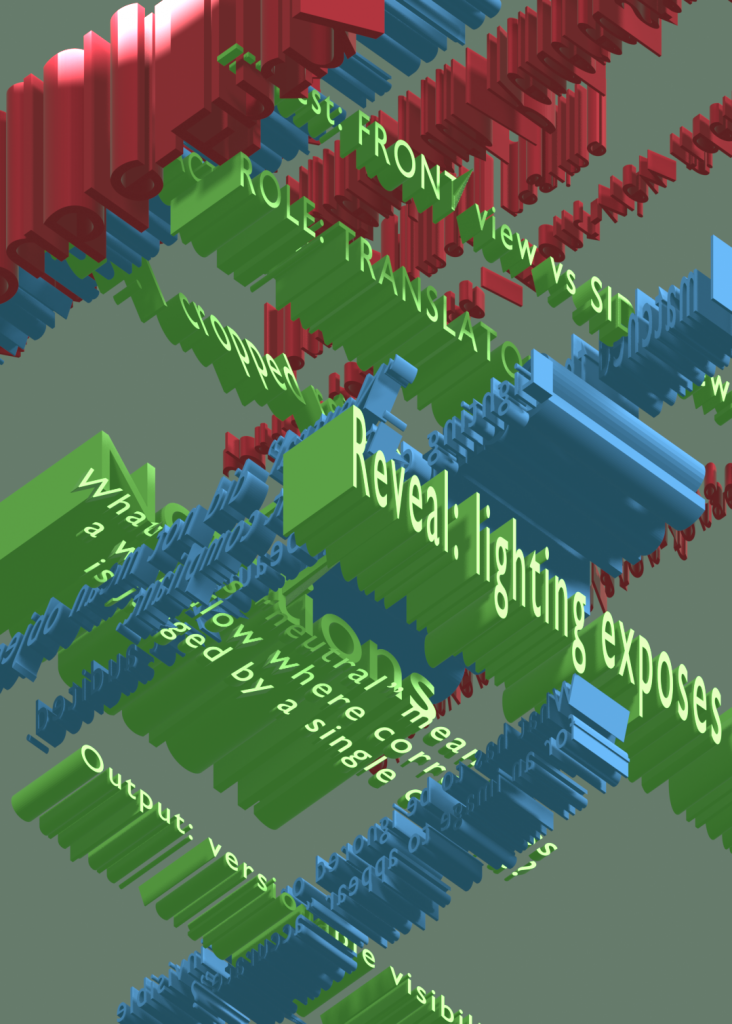

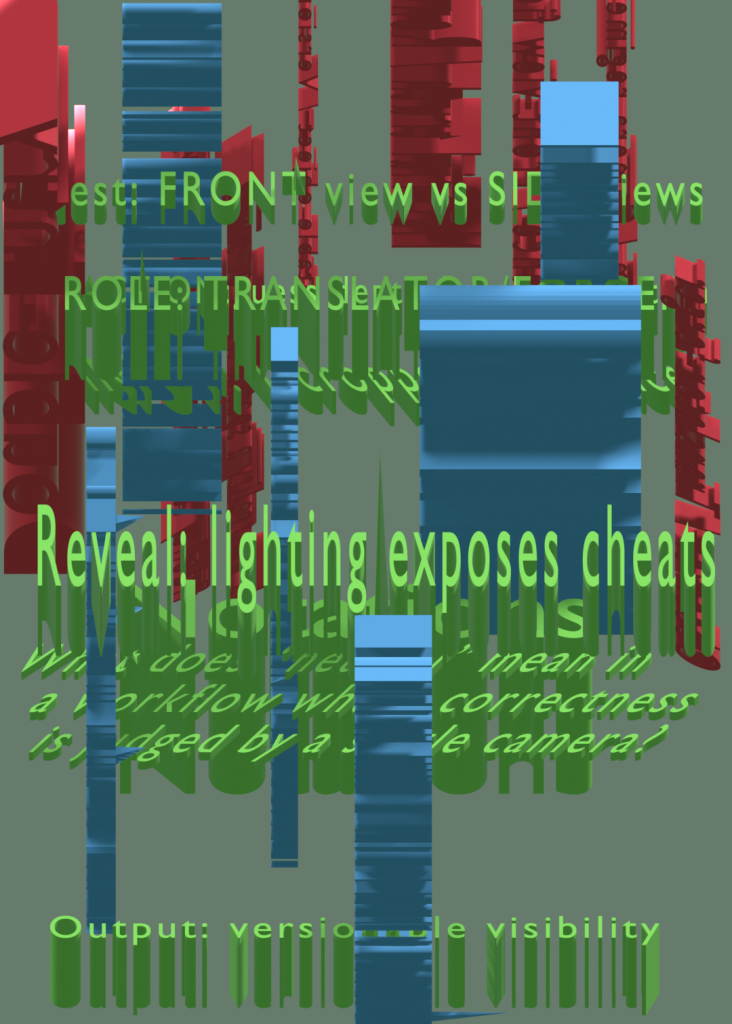

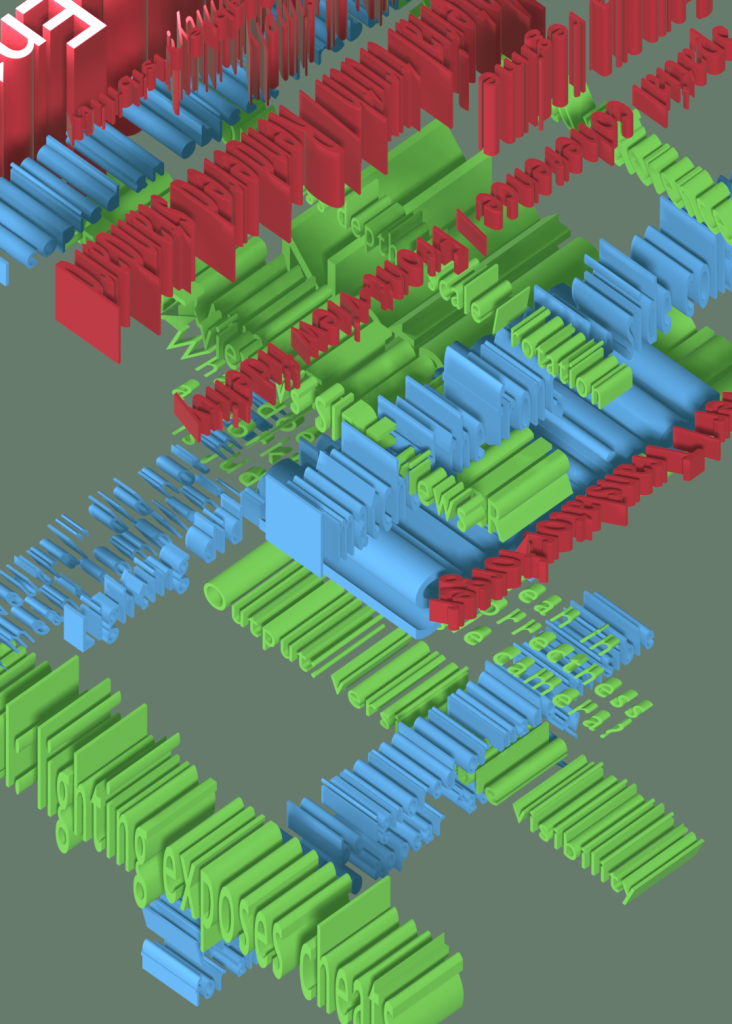

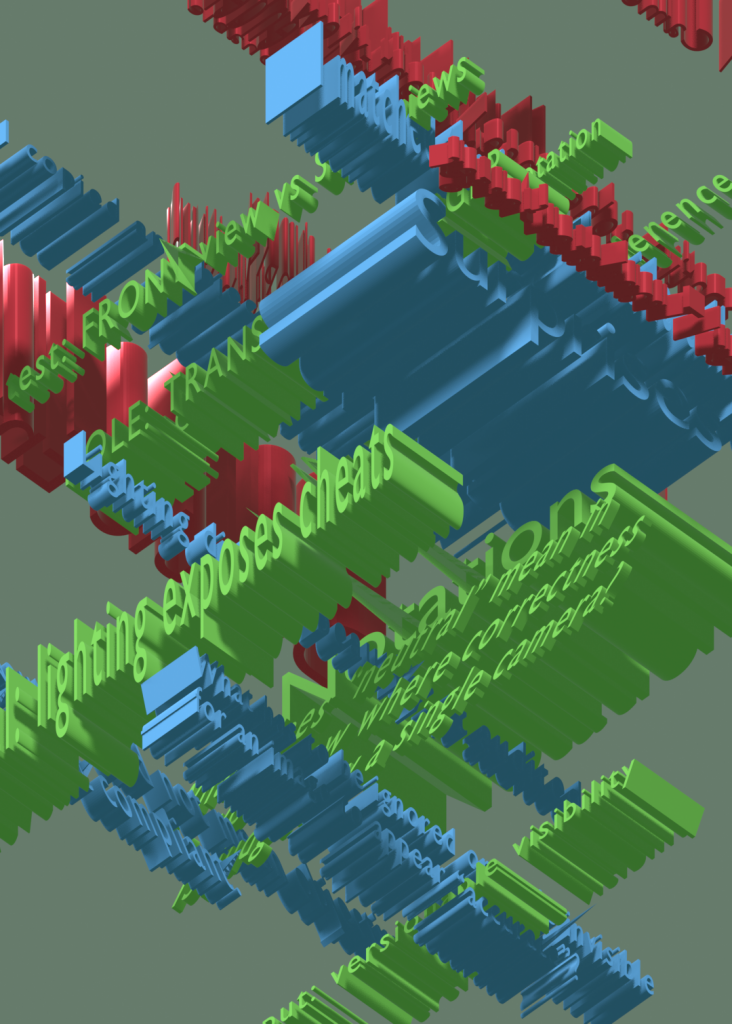

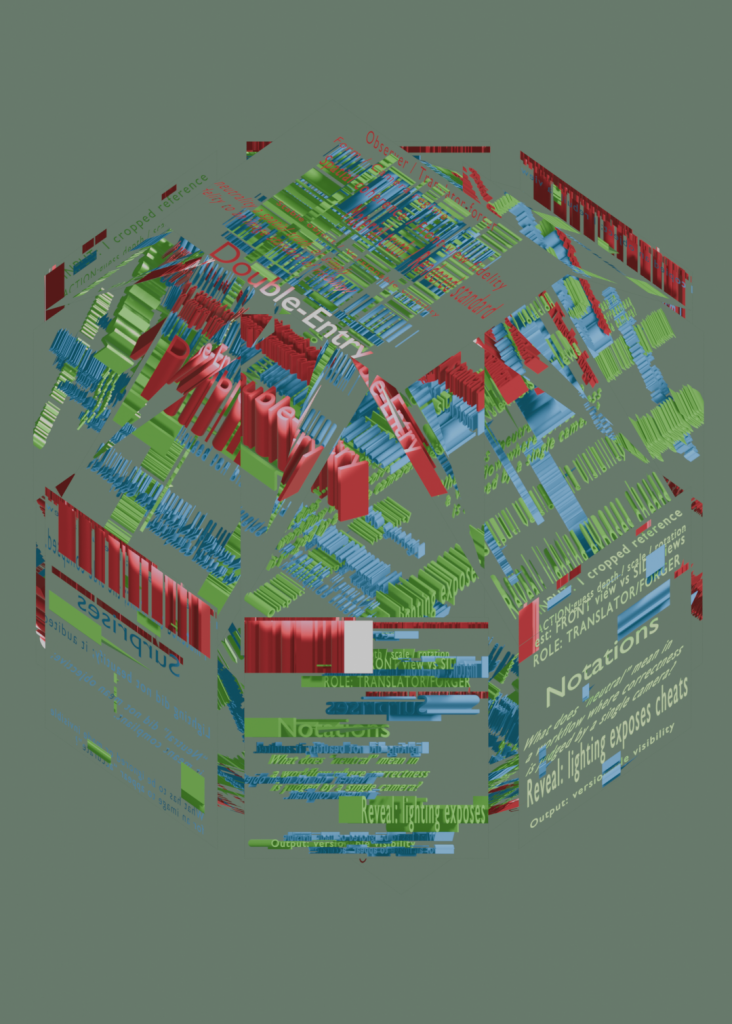

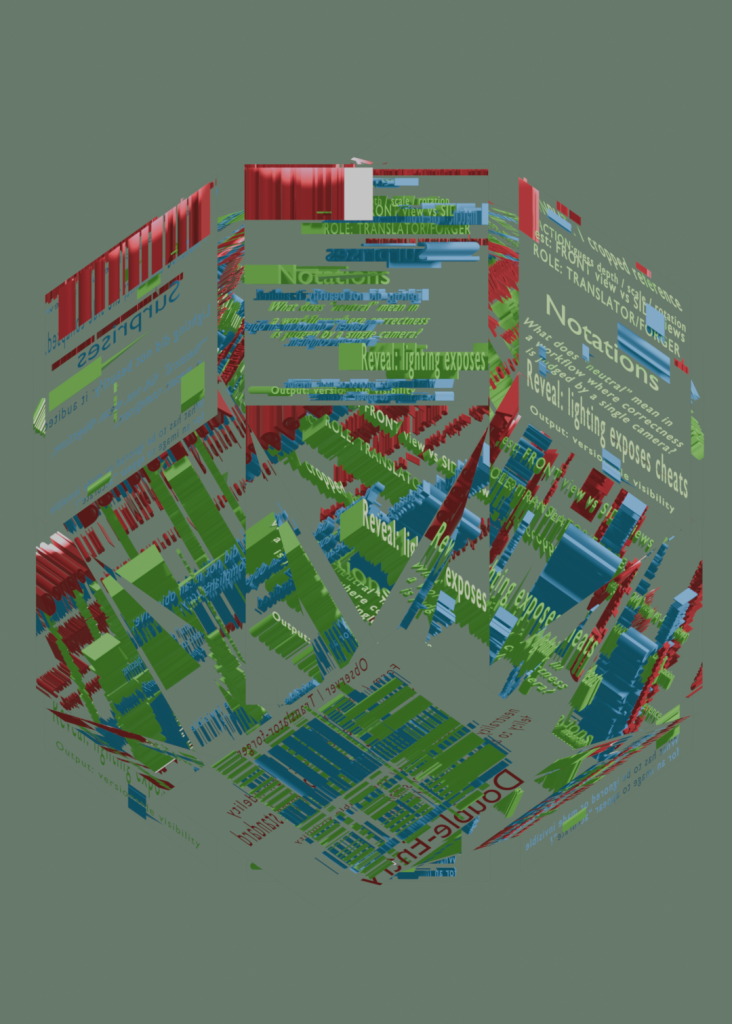

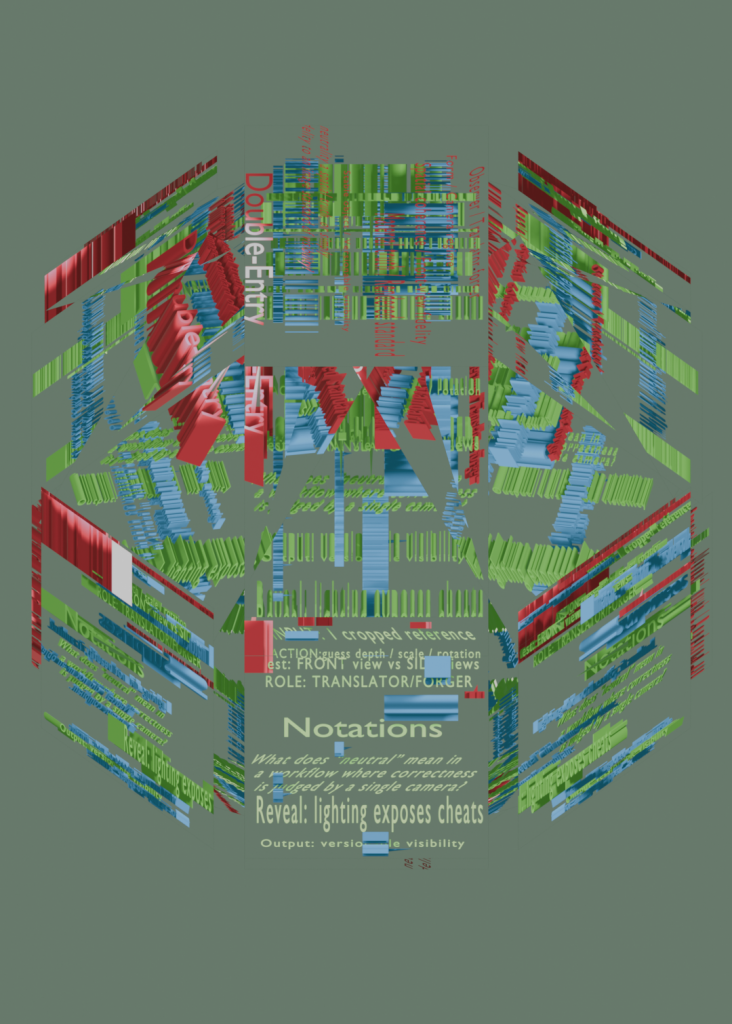

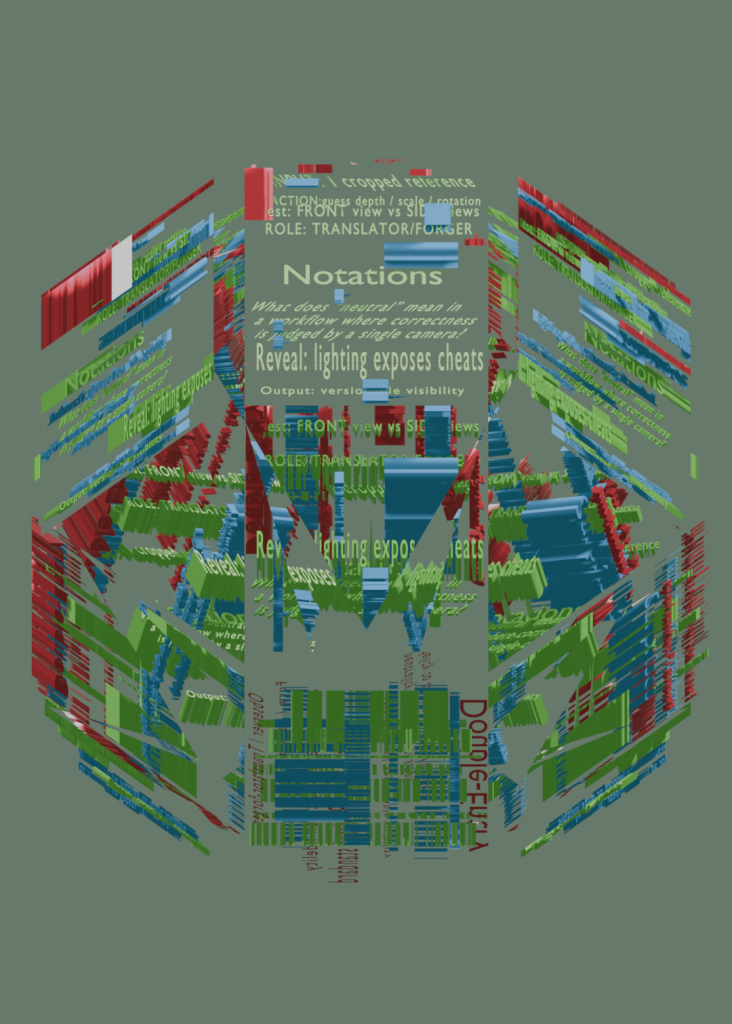

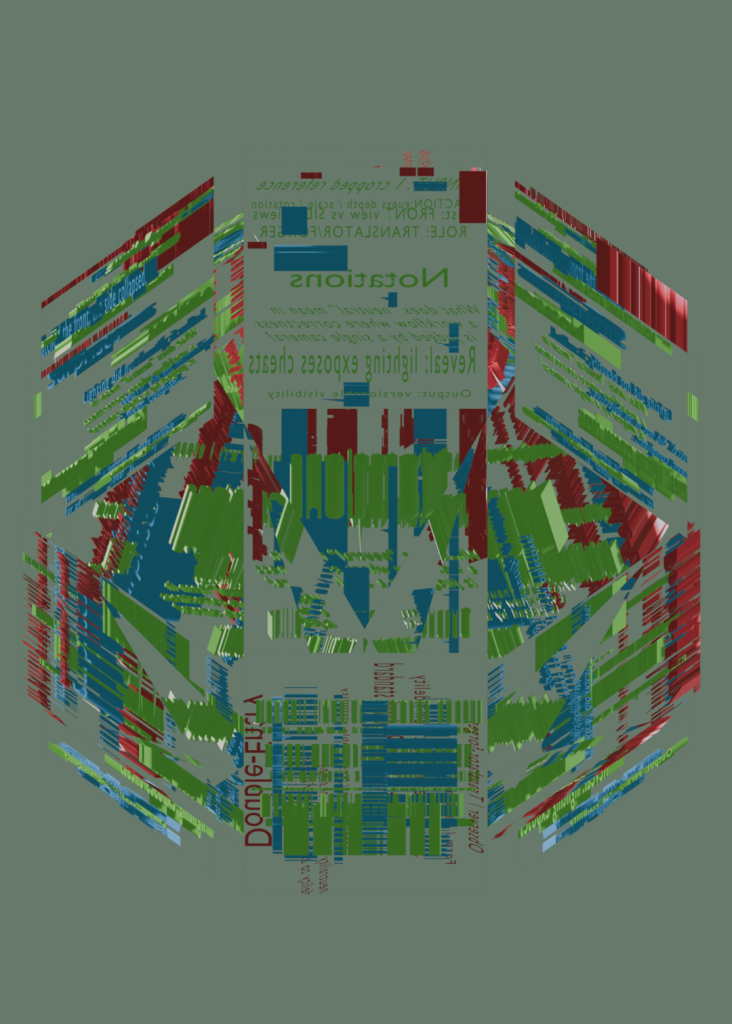

Inspired by Exercises in Style, I rewrote my findings in three styles: Notations, Double-Entry, and Surprises. I then placed the text into my iterative system at varied thicknesses and positions, each serving a different view (e.g., Notations for the front view, Double-Entry for the top view). I did not format the text but subjected it to the same viewing regime as the object. The texts gradually mixed and glitched, and the viewing system was constantly deconstructed, proofed, and regenerated in the process.

(Notation)

Input: one cropped reference

Action: guess depth / scale / rotation

Test: front view vs side views

Reveal: lighting exposes cheats

Output: versionable visibility

Role: translator/forger

What does “neutral” mean in a workflow where correctness is judged by a single camera?

–

(Double-Entry)

Form / Camera regime

Spatial coherence / Front-view fidelity

Material truth / Rendered standard

Stable object / Versionable visibility

Observer / Translator-forger

Is neutrality a spatial truth, or simply fidelity to an image standard of visibility?

–

(Surprises)

I copied an object; I rebuilt a view.

I matched the front; the side collapsed.

Lighting did not beautify; it audited.

“Neutral” did not mean objective; it meant compliant.

What has to be ignored or made invisible for an image to appear “accurate”?

I chose Blender out of curiosity about 3D-to-2D outputs and how a single render can feel like a flat slice of a scene. During the copy process, I realised I was not simply reconstructing an object but reconstructing a view and a system. Since the reference is a single cropped angle, most of my decisions were guesses: depth, scale, and rotation were repeatedly adjusted until they looked right from the front. Yet when I changed the viewpoint, the model often became uncanny, with floating connections and warped proportions, and lighting exposed these contradictions. This echoed my earlier observation that Blender’s XYZ views are a kind of re-planarisation: the object becomes whatever survives a chosen camera regime.

In this sense, Blender favours versionable, serviceable visibility. It produces images that can be refined, compared, and redeployed, rather than a single stable truth. My position in this process is closer to a translator or forger than an objective observer. I am not neutrally representing a real vase and flower; I am re-performing an already rendered image standard, aiming for a relative neutrality limited by my technical skill and the reference’s demands. Form becomes a communication strategy: camera, composition, lighting, and render settings organise hierarchy and mood as much as geometry does.

What counts as accurate or neutral representation in a tool built on camera, perspective, and industry defaults? Am I modelling an object, or modelling a standard of visibility: what is allowed to be seen and what can be ignored?

Berger’s Ways of Seeing provided the theoretical support. A reproduced image is mobile and recontextualised, and its meaning shifts with the maker, the viewer, and the setting (Berger, 1972). The reference image therefore carries a particular way of seeing, and my copy inevitably translates it rather than reproducing it neutrally.

Reading Jencks and Silver’s Adhocism further clarified my method. It framed my role as a bricoleur working under constraint, improvising with available tools, tutorials, and shared resources to rapidly meet a specific visual purpose (Jencks and Silver, 2013, p. 15). Surrealism, which fused common objects to create heterogeneity, has gradually been recontextualised by time and culture into an improvisational exploration of lasting works, a shift I found highly illuminating (Jencks and Silver, 2013, p. xix). Instead of chasing procedural correctness or a perfect system, the work progressed through purposeful trial and error, letting ad hoc networks replace hierarchical structures to produce impromptu slices (Jencks and Silver, 2013, p. 20). The most productive moments often came from imprecision and leftovers: mismatched joins, unstable depth, and other unseen problems became clues about how the reference’s visibility standard was constructed (Jencks and Silver, 2013, p. 16).

Following this lens, I shifted from chasing one perfect copy to an iterative experiment. I rebuilt the same scene through two contrasting modelling strategies: spatially coherent modelling, and camera-first modelling that sacrifices the unseen sides. Keeping materials constant, I tested each version through small camera shifts and different viewport or render modes. These comparative slices made neutrality measurable: fidelity holds only within a chosen viewing standard and collapses as soon as that standard changes.

References

Jencks, C. and Silver, N. (2013) Adhocism: The Case for Improvisation. Cambridge, MA: The MIT Press. (First published 1972).

Berger, J. (1972) Ways of Seeing. Available at: https://www.ways-of-seeing.com/ (Accessed: 28 January 2026).

I chose Blender mainly to explore how 3D-to-2D rendering turns “making an image” into designing a viewing system. During the copy process, I realised I was not simply reconstructing the image but reconstructing a view. Since the reference is a single cropped angle, most of my decisions were guesses: depth, scale, and rotation were repeatedly adjusted until they “looked right” from the front. Yet when I changed the viewpoint, the model often became uncanny, with floating connections and warped proportions, and lighting exposed these contradictions. This echoed my earlier observation (week 1) that Blender’s XYZ views are a kind of re-planarisation: the object becomes whatever survives a chosen camera regime.

What counts as “accurate” or “neutral” representation in a tool built on camera, perspective, and industry defaults?

Am I modelling an object, or modelling a standard of visibility—what is allowed to be seen and what can be ignored?

In 3D-to-2D rendering, outlines behaved like algorithmic borders: stable when the camera moved, but broken when I rotated in the viewport, suggesting the “edge” is not the object’s truth but a rule-based decision. Materials amplified this: changing nodes, light, and motion produced entirely different moods from the same geometry. The “materiality” felt less virtual than simulated—a parameter system that generates narrative.

Next, I will iterate systematically rather than chase one perfect copy. I will fix the model and camera, then produce more versions by changing only material-node variables (roughness, transmission, refraction, rim highlight, outline thresholds) and render passes (beauty vs freestyle). Each version will be logged with a short note on what changed, what surprised me, and what the tool seems to favour—so the process itself becomes the outcome.
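The plan above, changing one variable at a time against a fixed model and camera, could be kept as a simple log. The sketch below is only an illustration of that bookkeeping: the baseline numbers, the trial values, and the names `BASELINE`, `TRIALS`, `Variant`, and `one_at_a_time` are all hypothetical, and nothing here talks to Blender itself.

```python
from dataclasses import dataclass

# Hypothetical baseline: model and camera stay fixed, only these vary.
BASELINE = {
    "roughness": 0.5,
    "transmission": 0.0,
    "refraction_ior": 1.45,
    "rim_highlight": 0.0,
    "outline_threshold": 0.5,
    "render_pass": "beauty",
}

# Hypothetical alternative values to try for each variable.
TRIALS = {
    "roughness": [0.1, 0.9],
    "transmission": [1.0],
    "refraction_ior": [1.0, 2.0],
    "rim_highlight": [1.0],
    "outline_threshold": [0.1, 0.9],
    "render_pass": ["freestyle"],
}


@dataclass
class Variant:
    name: str
    settings: dict
    changed: str            # the single variable that differs from baseline
    surprise: str = ""      # filled in by hand after looking at the render
    tool_favours: str = ""  # what the tool seemed to favour in this version


def one_at_a_time(baseline, trials):
    """Build one variant per trial value, changing a single variable each time."""
    variants = []
    for key, values in trials.items():
        for value in values:
            settings = dict(baseline)  # copy so the baseline stays untouched
            settings[key] = value
            variants.append(Variant(f"{key}={value}", settings, key))
    return variants


log = one_at_a_time(BASELINE, TRIALS)
```

Because each entry differs from the baseline in exactly one setting, any change in mood between two renders can be attributed to that setting rather than to the geometry or the camera.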