20022310*

My previous understanding of the brief:

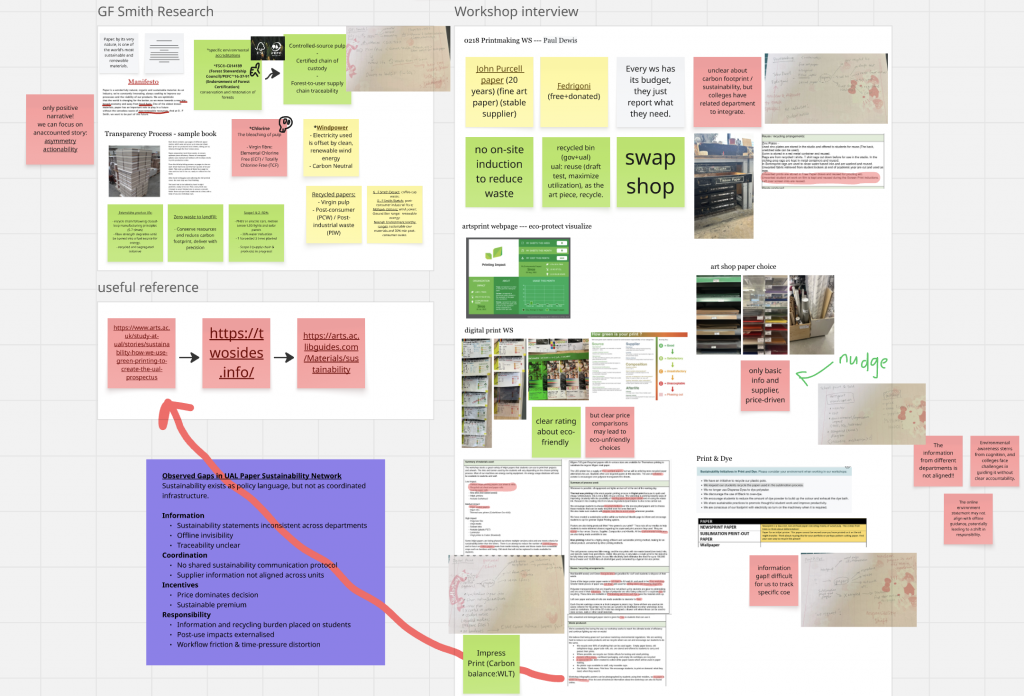

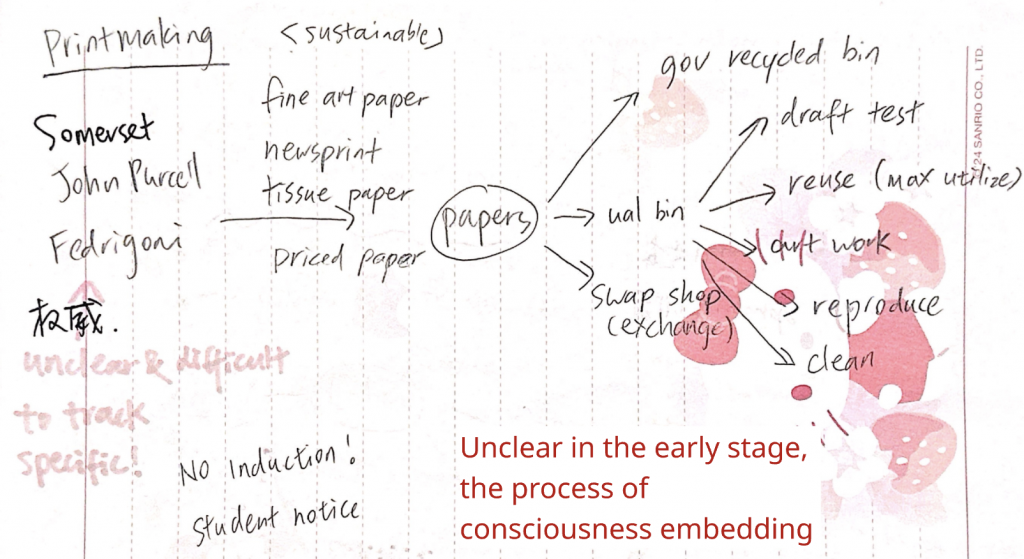

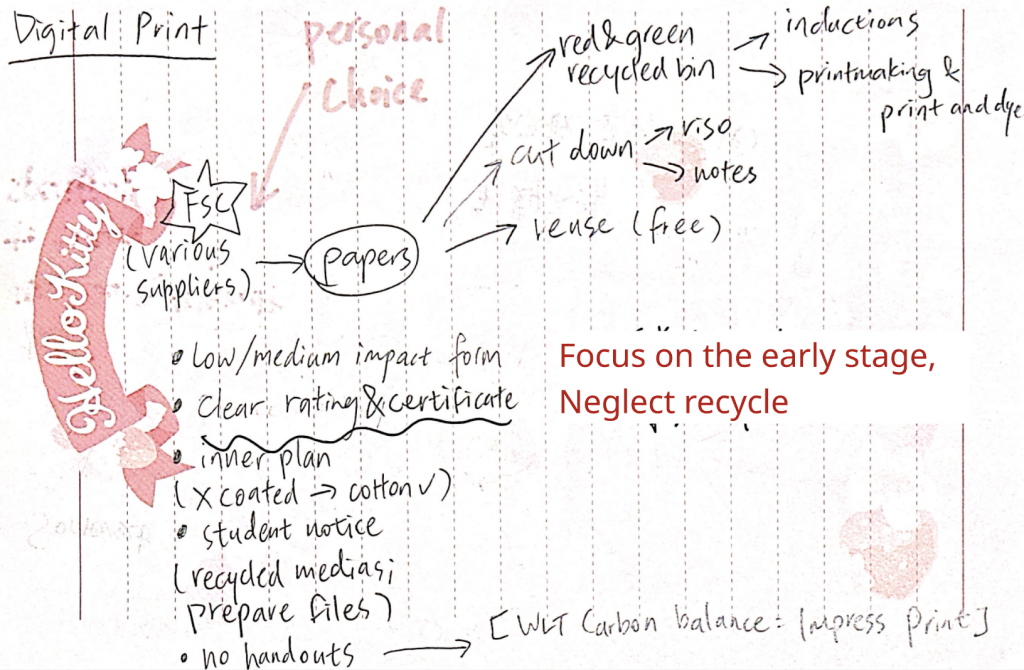

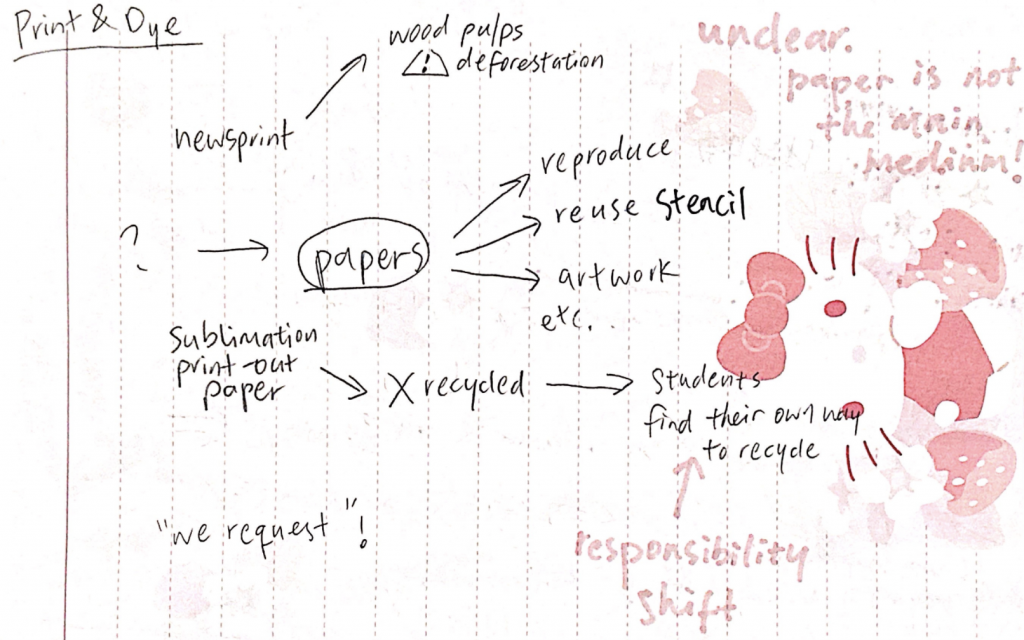

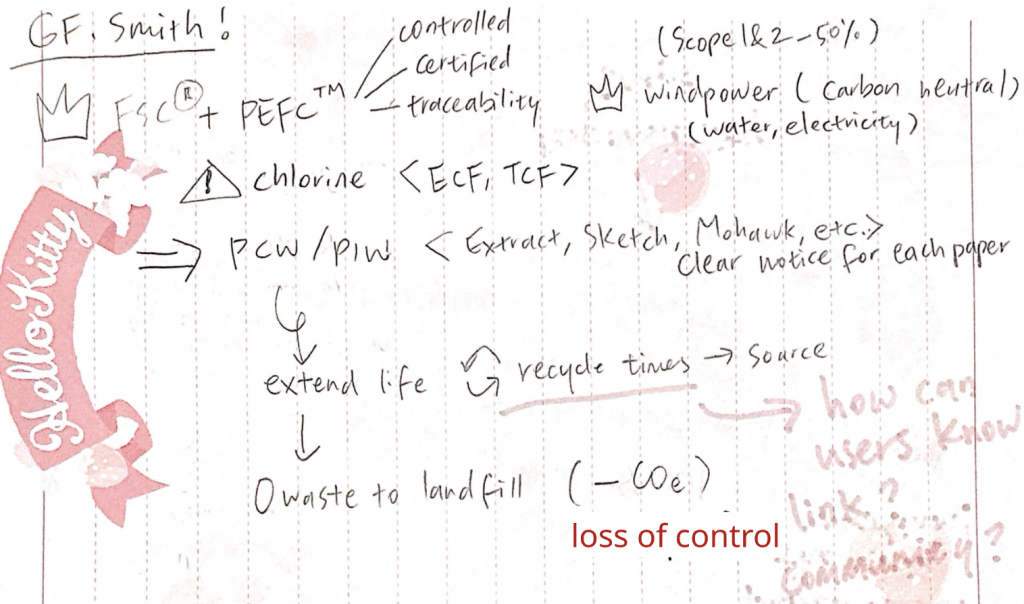

In Week 1 I was in charge of all the workshop research except for publication.

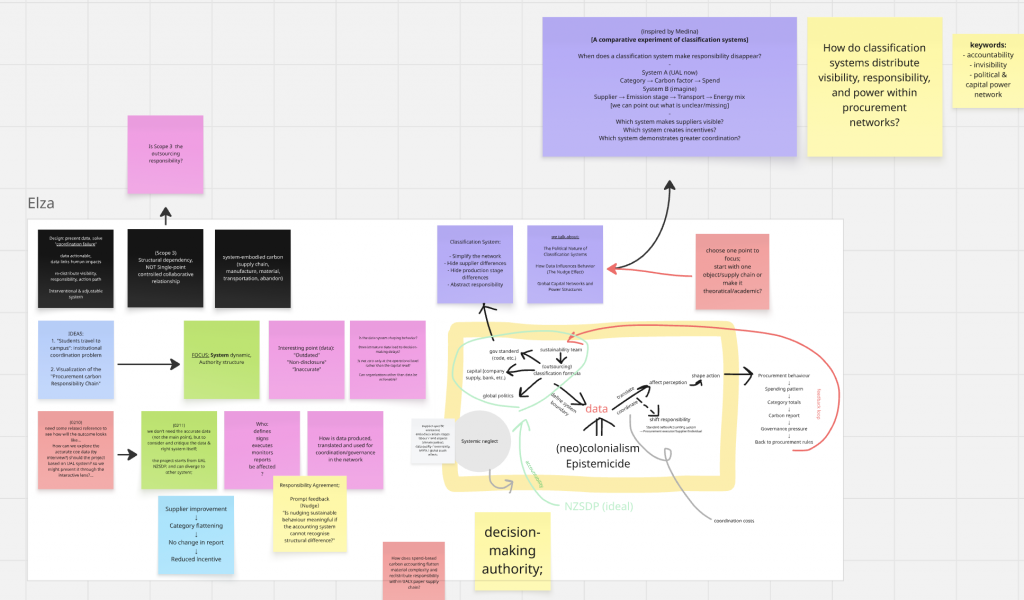

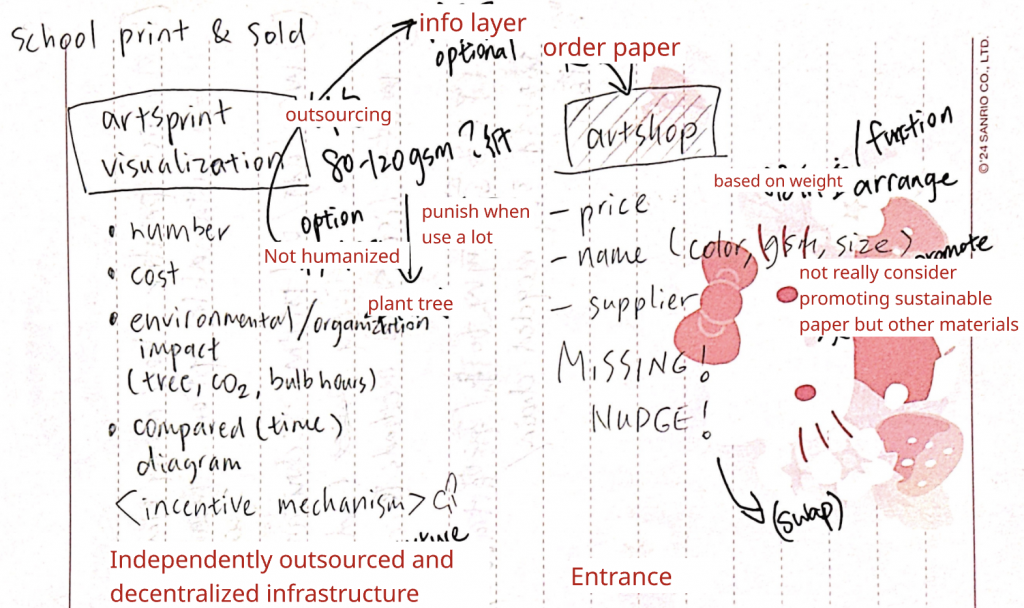

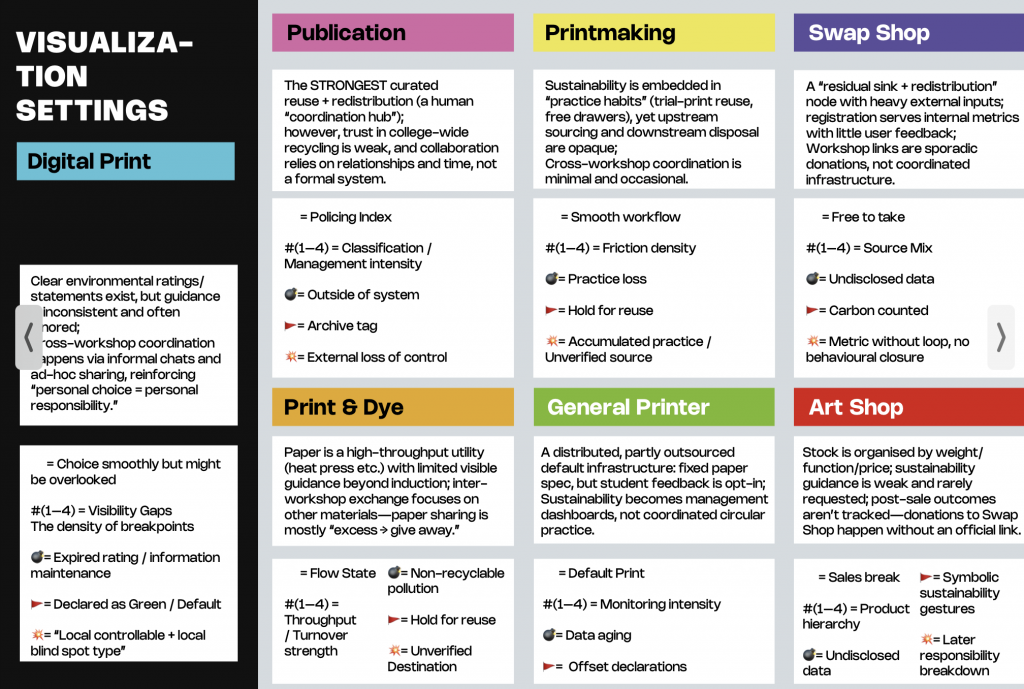

After the Week 1 Friday presentation, Ruoying and I designed the questions for the student survey; she was in charge of most of it. Once I had received the analysis of the student survey, I designed the specific questions for the survey of seven departments (Digital Print, Printmaking, Publication, Print & Dye, Art Shop, Swap Shop, General Printer). The questions are based on my previous research and on four keywords: agency, paper lifecycle, cross-departmental coordination, and sustainability incentives.

(The recordings and emails will be kept private.)

Core diagnosis

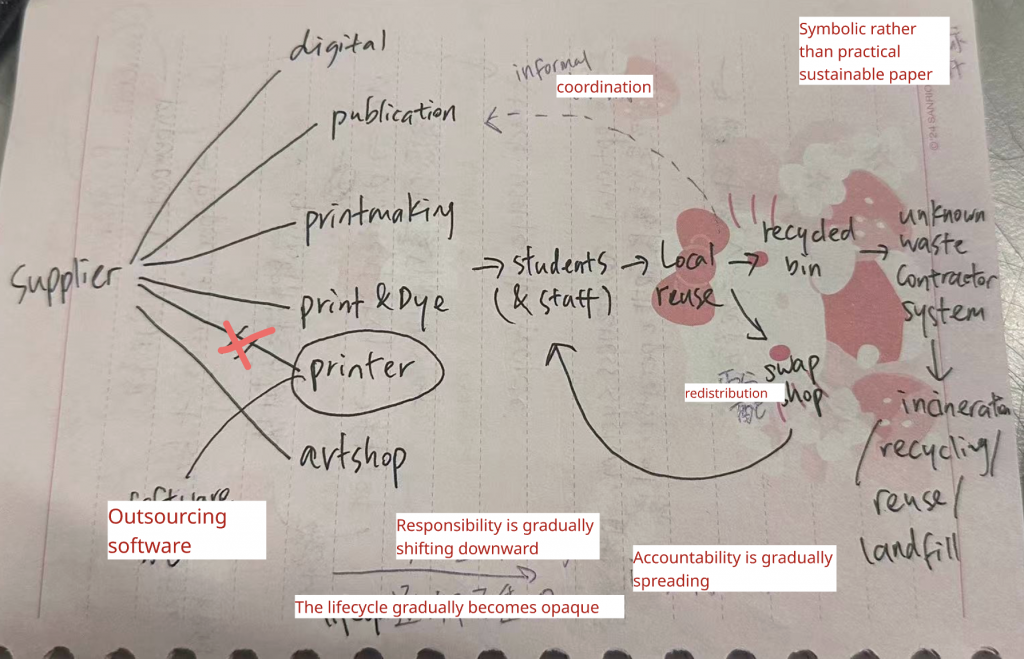

UAL’s paper sustainability system operates as a fragmented network of locally sustainable practices without a unified, institutionally coordinated circular infrastructure.

UAL’s paper sustainability system is characterized by:

Insight 1 — Lifecycle visibility collapses downstream

Paper lifecycle visibility decreases progressively as paper moves downstream. The final disposal stage is invisible even to technicians.

Insight 2 — Circularity exists locally but not institutionally

Individual workshops implement reuse practices. However, no centralized system coordinates material flow across workshops. UAL’s circularity is distributed and isolated, not systemic.

Insight 3 — Sustainability responsibility is progressively externalised

Accountability diffuses as paper moves through the system. No actor owns full lifecycle responsibility.

Insight 4 — Coordination operates socially, not structurally

Material redistribution depends on:

UAL’s paper network functions through interpersonal coordination rather than systemic coordination.

Insight 5 — Sustainability knowledge exists but is not embedded in decision-making

Material decisions are driven primarily by:

Insight 6 — Sustainability metrics operate symbolically, not operationally

UAL tracks environmental impact via PaperCut and PrintReleaf.

However:

Sustainability exists as a reporting system, not an operational system.

Insight 7 — UAL’s sustainability strategy relies on compensatory offsetting rather than preventative circularity

Carbon offset programs such as PrintReleaf compensate for paper consumption after it occurs. They do not structurally reduce paper production, distribution, or waste.

Insight 8 — The paper system operates as a decentralized archipelago rather than an integrated network

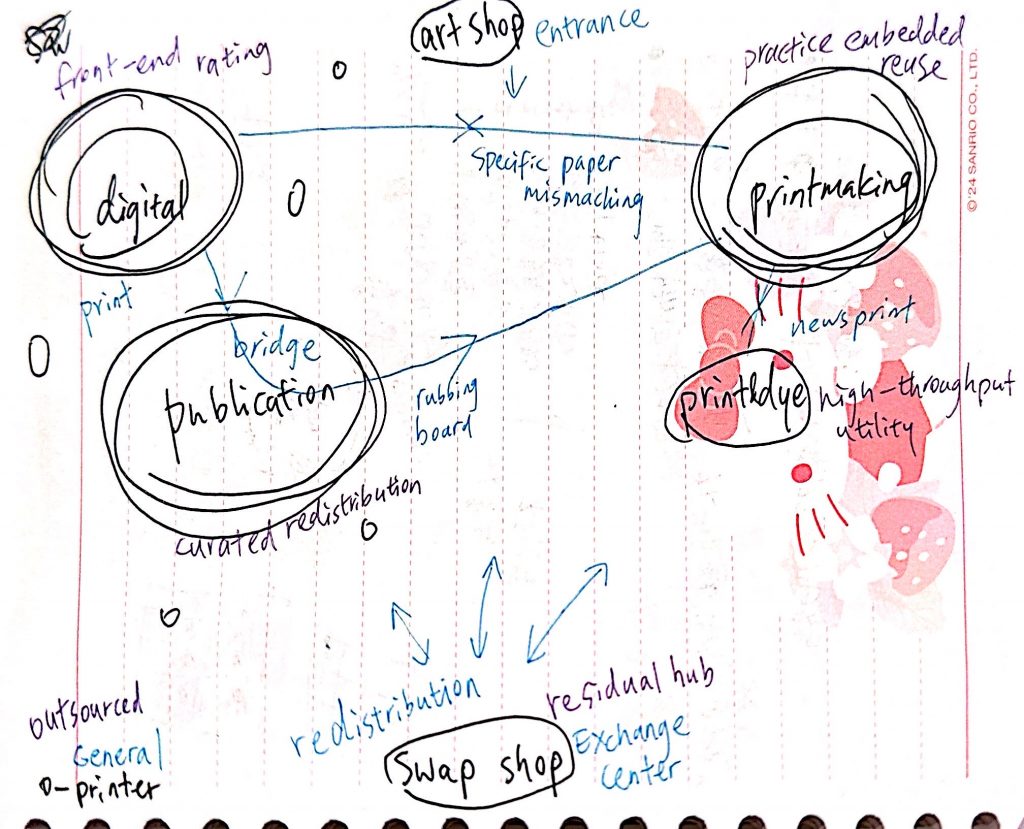

Each workshop functions as an isolated sustainability island with its own reuse practices. Material circulation between workshops is informal and inconsistent. No unified infrastructure connects these nodes into a coherent circular system.

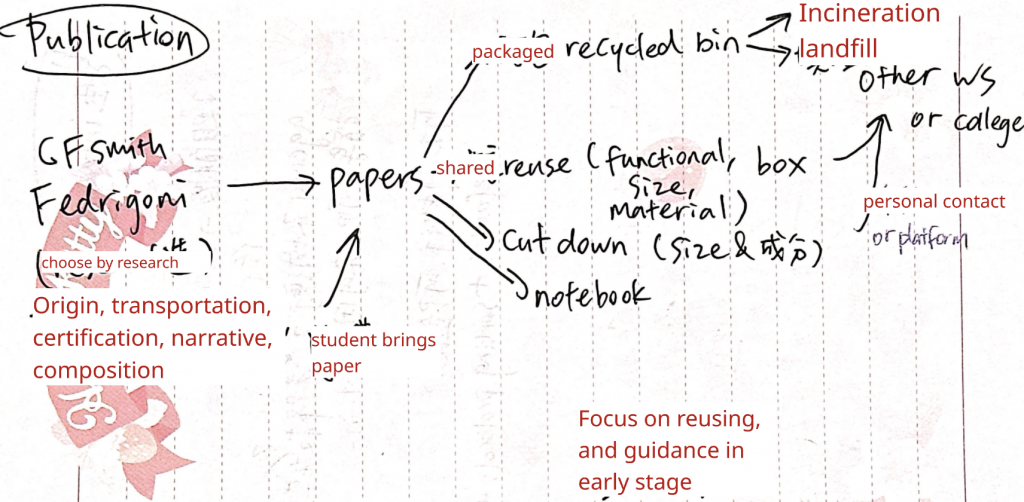

Then I integrated the workshop maps:

Departments as islands. My plan for the homepage structure:

My visual attempts:

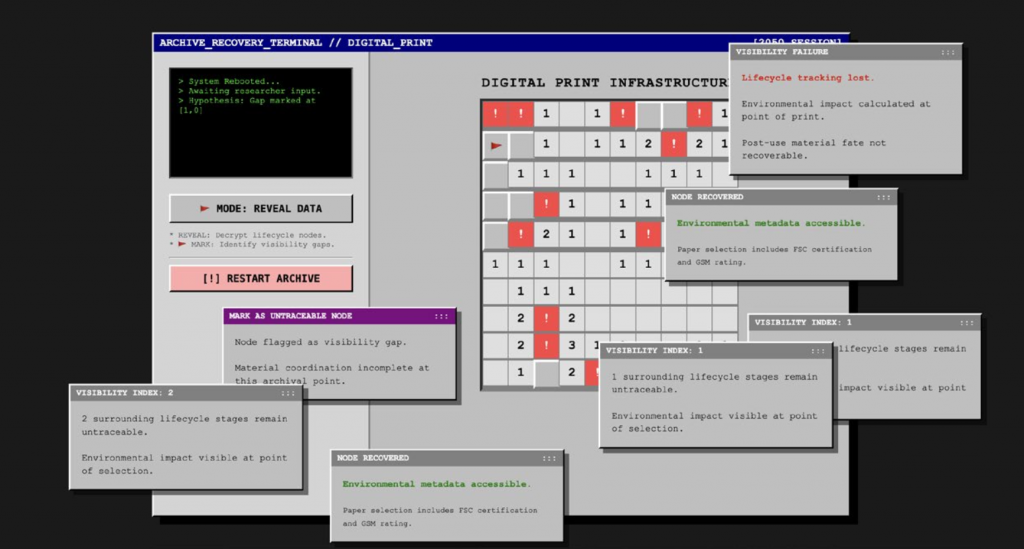

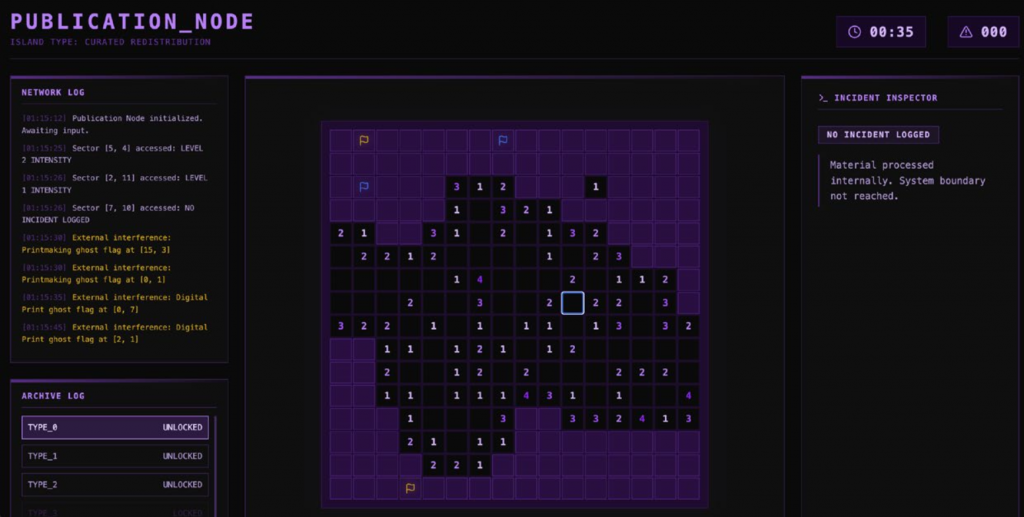

I then built our final outcome: https://sawp-shop.vercel.app/; https://print-making.vercel.app; https://digital-print-gamma.vercel.app/; https://print-dye.vercel.app/

Star’s The Ethnography of Infrastructure became a decisive lens for me after completing interviews with students and workshop technicians, helping me re-read the transcripts as evidence of infrastructure rather than isolated anecdotes. She argues that infrastructure is typically invisible when it works, and becomes legible “upon breakdown”: “the normally invisible quality of working infrastructure becomes visible when it breaks” (Star, 1999, p.382). This reframed recurring comments in our interviews (missing information, unclear disposal routes, procedural dead-ends) not as individual negligence, but as moments where the system reveals its own limits.

Meanwhile, Star notes that “nobody is really in charge of infrastructure,” and that it is rarely changed “from above,” but shifts through slow negotiation (Star, 1999, p.382). This gave me language for the accountability pattern we observed: without any actor holding end-to-end visibility, responsibility disperses along the paper lifecycle and is often personalised at the point of use and disposal.

Star also legitimised the “invisible work” I heard about repeatedly from technicians: ad-hoc fixes, informal guidance, and constant patching that keeps workflows moving (Star, 1999, pp.385–386). Once I recognised how many variables shape coordination (budgets, time pressure, training, local norms, procurement constraints, signage, and interpersonal networks), proposing a single solution felt reductive. Instead, my position shifted toward designing an engaging interface that makes breakdowns, gaps, and responsibility-shifts experienceable, so that the system can be discussed and negotiated rather than prematurely “fixed.”

Ludovico’s account of post-digital publishing helped me name a core issue in UAL’s paper circulation: the problem is not a lack of communication, but the lack of a usable interface for that communication. He argues that e-publishing becomes accessible through “new interfaces, habits and conventions,” and that its real power lies less in multimedia integration than in “superior networking capabilities” (Ludovico, 2012, p.153). This resonates with our interviews with students and workshop technicians: departments are not disconnected, but connected through informal, uneven channels. Information exists, yet rarely becomes actionable at the point of decision.

Crucially, Ludovico observes that DIY print networks still “lack… mechanisms able to initiate social or media processes,” what he calls the “processual level” (Ludovico, 2012, p.154). I use this as a conceptual hinge to infer an accountability dynamic: when processual mechanisms and interoperable interfaces are missing, accountability does not consolidate at the system level. Instead, it diffuses along the lifecycle and becomes personalised, leaving individual users to “do the right thing” at the end of the chain—even when guidance is partial, embedded, or invisible.

Latour reshaped how I understand UAL’s paper circulation as a network of coordination and agency structured through visualisation and paperwork, rather than a simple information gap. He argues that in controversies the “winner” is often the actor who can muster and align the largest number of allies, and that inscriptions and classifications are not neutral representations but devices that stabilise claims as actionable facts (Latour, 1990, p.5). This directly informed my reading of sustainability metrics and departmental rules: they do not merely describe environmental impact, but distribute attention and actionability across the network—what becomes visible, to whom, and where, determines who is expected to take responsibility.

Latour’s discussion of “paper shuffling” further clarifies why accountability in our case study tends to diffuse. He frames the circulation and compression of documents as a key source of power, enabling distant people and events to become manageable on a desk (Latour, 1990, p.26). This helped me interpret our interviews with workshop technicians and students: the “hidden coordination web” and questions of agency emerge precisely where documentation breaks down, categories fail to align, or records become inaccessible—conditions under which system-level accountability cannot consolidate and responsibility slides toward individual users.

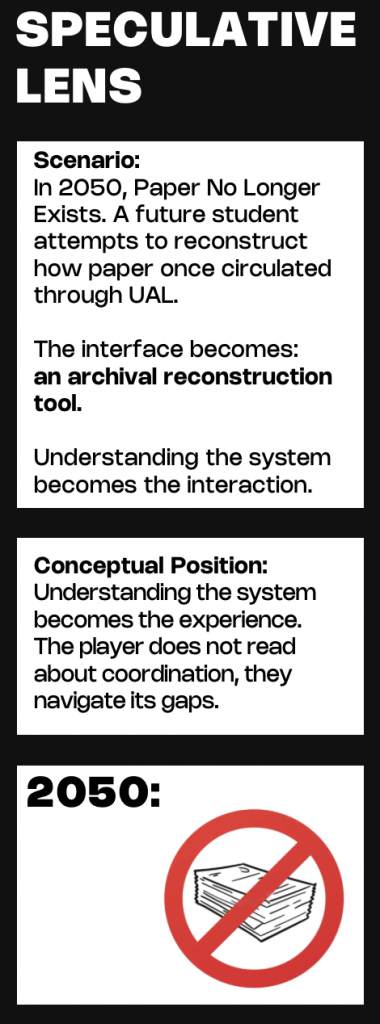

Dunne and Raby helped me reframe “the future” as a critical medium rather than a destination. They write that futures are “not a destination… but a medium” to think with, becoming critique when they expose the limits of the present (Dunne and Raby, 2013, p.3). However, the most useful contribution for my work is their treatment of preferable futures. They insist that “preferable” is never straightforward—preferable for whom, and who decides?—and note that it is currently shaped largely by government and industry, while public participation as consumers and voters remains limited (Dunne and Raby, 2013, p.5). This pushed me to read sustainability less as an awareness problem and more as a question of agency and decision-making structures: what counts as “better” is often already scripted through defaults, visibility, and control over choice. Their framework directly informed our final outcome. We used a paperless-future premise alongside a “future archaeology” stance, translating our research into a web-based game. By constructing—and deliberately hacking—its internal rules, we made systemic breaks in coordination and shifting responsibilities visible, inviting viewers into debate rather than presenting a solution.

Unknown Fields Division models design research as an expeditionary practice: travelling to landscapes shaped by extraction and global supply chains, and “bearing witness” to the infrastructures that sit behind everyday technological futures (Unknown Fields Division, n.d.). Their work then returns as narrative evidence—web pages, films, and objects—able to hold “dispersed narratives” together and make systemic relations graspable (Unknown Fields Division, n.d.).

This provided a concrete methodological reference for my project. Rather than treating research as data collection followed by a neutral report, Unknown Fields frames research as the construction of a narrative device: a designed sequence that helps viewers encounter hidden dependencies across a network. Their expeditions (for example, tracing lithium infrastructures “behind the scenes of our electric future”) demonstrate how distant sites of extraction become embedded in ordinary decisions and devices (Unknown Fields Division, n.d.).

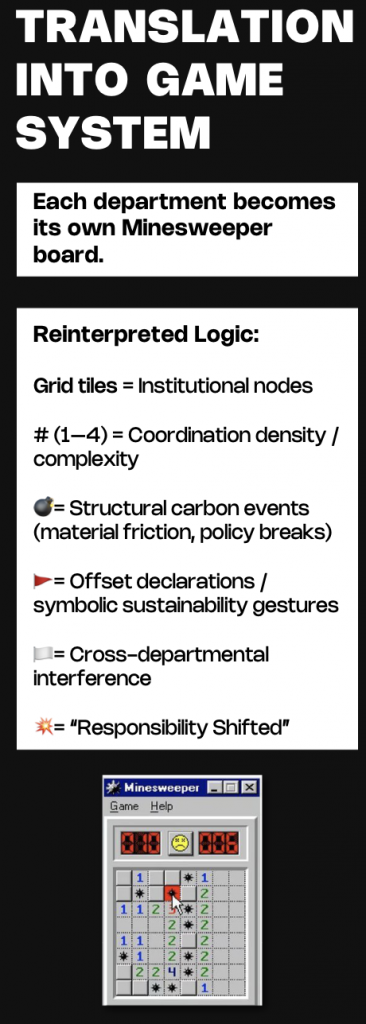

Following this logic, we treated our own investigation as a form of “future archaeology” and explored a more playful, disruptive mode of presentation—eventually developing an interactive web experience (a hacked Minesweeper-like structure) to make coordination gaps and invisible connections felt rather than merely described.

Tactical Tech’s The Glass Room demonstrates how an invisible system can be translated into a participatory critical experience. Rather than presenting data and privacy as abstract information, the project “aims to demystify technology through immersive, thought-provoking, self-learning exhibitions,” turning hidden infrastructures into something visitors can explore and question (Tactical Tech, n.d.).

A key tactic is its use of a familiar interface as a critical shell: the exhibition is staged as a sleek “tech shop,” where the retail language of product display is deliberately subverted because “nothing is for sale” (The Glass Room, n.d.; Mozilla, 2017). This reversal shifts attention from individual “smart choices” or self-discipline toward the conditions that shape choice: defaults, visibility, and what is made legible at the moment of decision. In other words, the visitor’s confusion is productive: it becomes a method for recognising how systems guide behaviour while appearing neutral.

This strategy directly informed our own approach to representing a fragmented sustainability network. Instead of relying on explanatory messaging alone, we explored a game-like web interface that intentionally disrupts expectations: by reversing and “hacking” familiar rules (a Minesweeper logic where each screen behaves differently), players are pushed to navigate uncertainty, locate missing information, and experience how “truth” is unevenly accessible across a system. The Glass Room validated interactivity as critique: not a solution, but an encounter that makes structure felt.

Through this project I clarified my position as a practitioner: I am strongest as a researcher and framework-builder who translates messy institutional realities into legible structures. I led much of the inquiry by interviewing students and workshop technicians across departments, then synthesising their accounts into a speculative “future archaeology” of UAL’s paper circulation in 2026, informed by the university’s net zero plan.

The research revealed a system that can appear sustainable yet remains unevenly coordinated. As paper moves through its lifecycle—from supply chain decisions to use, waste handling, and recycling—key information progressively disappears (traceability, recyclability, carbon indicators). At the same time, responsibility shifts downward along that route and can become invisibly personalised: users are expected to “do the right thing” at the end of the chain, even when guidance is partial, embedded, or inconsistent. In this sense, accountability diffuses rather than consolidates.

Crucially, awareness and environmental knowledge do not automatically translate into behaviour: decisions are still shaped by price, convenience, timing, and what is practically visible in the moment. Our Minesweeper-inspired interface became my way to materialise this—making absence, misalignment, and responsibility-shifts experienceable, so the system can be questioned and negotiated rather than simply announced.